I come from an ‘on-premise’ background. I’ve spent years in organisations with on-premise systems such as Dynamics 365. Take me into a server room that’s alive with whirring fans, and I get quite nostalgic. Those were the days…well, in some ways, anyhow. But having recently discovered some quite helpful functionality, I thought I’d share it with others!

See, when it came to Dynamics 365 security, there was no way to automate things. Yes, users had to be created in Active Directory (and also, in a folder that the Dynamics install could refer to within AD!), but they had to be manually added to Dynamics 365. There was no way to automate this (from recollection – then again my memory grows dim with the fog of time).

So what the system administrators needed to do was to manually go to Settings/Security within the system, and there they could either add a single user at a time, or multiple users. They would then assign role/s (for multiple users, all of the users would need to have the same role/s – it wasn’t possible to modify individual users within this process).

One way to slightly speed up time in handling different security roles was to have teams, relating to the business needs. The security role/s would be created, assigned to a team, and then any user added to the team would automatically get all of the permissions that they needed.

Then came the heady world of Dynamics 365 being online! Well, nothing much changed really, at least not for a little while.

But then, things really did change, in May 2019. Functionality for security teams within Dynamics 365 was increased. Notably, there was now something called a ‘AAD Security Group Team’:

So what was this magical new item?

When we create a team, and we set the Team Type to ‘AAD Security Group’, we’re now able to set an AAD Object ID. In fact, it’s required! After we’ve created this object within Dynamics 365, we can then apply security role/s to it directly (as we could to any other team records beforehand):

Let’s take a moment to reflect & think on this. Until now, we’ve had to handle security directly within Dynamics 365. Now, we have the ability to have an Azure Active Directory (for that is what AAD stands for) group, and reference it within Dynamics 365.

Suddenly new possibilities open up. As part of the on-boarding process (for example) we can users to specific AAD security groups, which will then give them access with appropriate permissions within Dynamics 365. We’re also able to have multiple AAD groups, each inheriting a different set of Dynamics 365 roles, and thereby create a multi-layering approach to different business & security needs.

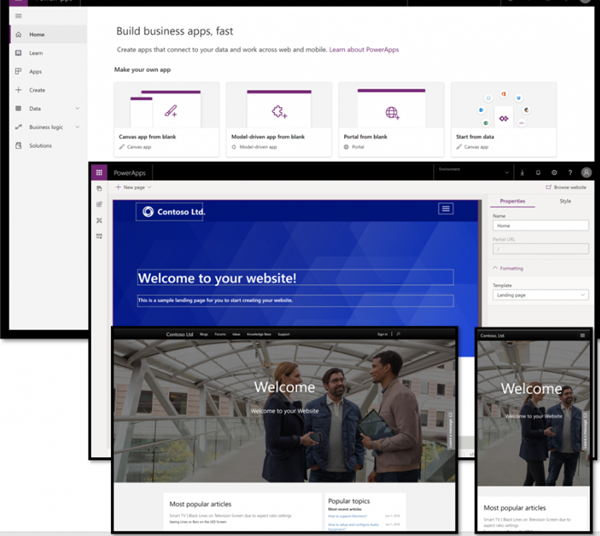

We’re also able to use tools such as PowerShell, LogicApps, Power Apps & Power Automate to carry out automation around this. There’s an Azure AD connector (https://docs.microsoft.com/en-us/connectors/azuread/) which gives the ability to set up & administer these.

We’re actually using this functionality now in some of our COVID-19 response apps. Instead of needing our own support desk to manage the (external) users, we’ve provided an interface where client IT departments can quickly log in, upload a list of users, and assign them to the relevant AAD group/s. It’s very quick, and allows the users to onboard to the Power Apps within minutes!

So with knowing this, how do you feel it might help benefit you? Comment below – I’d love to hear!